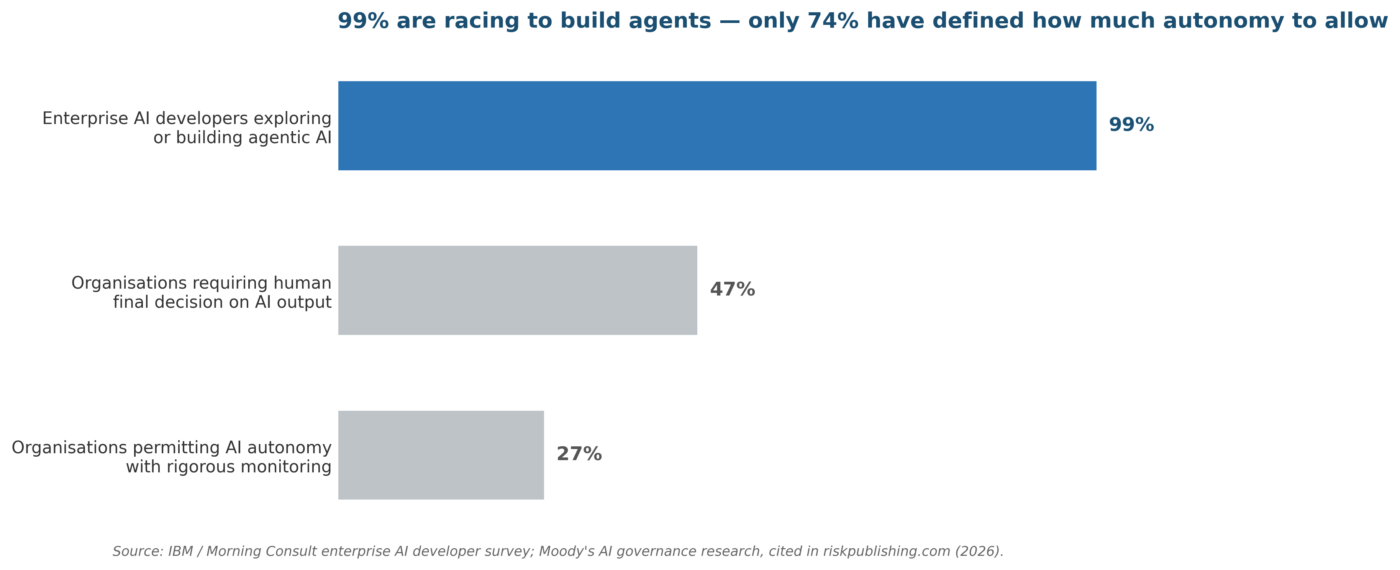

Executive Summary

AI agents that plan, decide, and act without human intervention are no longer experimental. An IBM/Morning Consult survey found that 99% of enterprise AI developers are exploring or building AI agents. Yet most organizations still govern these systems with frameworks designed for static, supervised models. This post maps the emerging discipline of agentic AI risk management to the NIST AI Risk Management Framework (AI RMF 1.0), drawing on the February 2026 UC Berkeley CLTC Agentic AI Risk-Management Standards Profile and Singapore’s Model AI Governance Framework for Agentic AI. It provides a practical roadmap for risk professionals to identify, assess, and control the unique risks of autonomous AI systems.

What Is Agentic AI and Why Does It Change the Risk Equation?

Traditional AI models receive a prompt, produce an output, and stop. Agentic AI systems operate differently.

They set sub-goals, select tools, call external APIs, store and retrieve memory, and chain multiple actions together to achieve a user-defined objective.

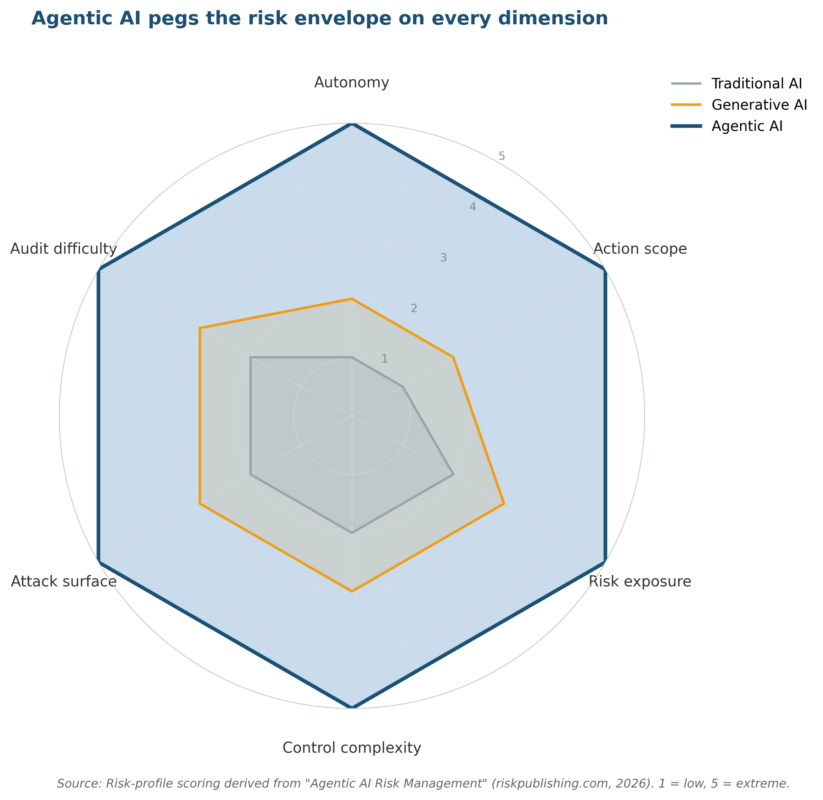

This autonomy is what makes agentic AI risk management a distinct discipline from traditional model risk management: autonomy multiplies exposure in ways that conventional risk assessment cannot capture.

Consider a procurement agent tasked with sourcing a vendor. It searches the web, compares pricing, drafts a request for proposal, and sends it to three suppliers, all without a human approving each step.

If the agent misinterprets the scope, selects a sanctioned entity, or leaks proprietary pricing data in the RFP, the organization faces regulatory, financial, and reputational consequences.

These are the kinds of scenarios that make agentic AI risk management a board-level priority.

For a primer on how operational risk management applies to scenarios like these, see What Is Operational Risk Management? on Risk Publishing.

Defining Characteristics That Create New Risk Vectors

- Autonomy: Agents take multi-step actions with minimal or no human-in-the-loop approval.

- Tool access: Agents interact with databases, APIs, file systems, and external services, each an entry point for data leakage or unauthorized actions.

- Memory and state: Persistent memory means an early error can propagate through an entire decision chain.

- Multi-agent coordination: When multiple agents collaborate, emergent behaviors arise that none of the individual agents were designed to exhibit.

- Opacity: Agentic workflows can be difficult to audit because the reasoning and tool-call sequences are not always logged or explainable.

The Agentic AI Risk Taxonomy: What Can Go Wrong

OWASP’s Agentic Security Initiative has catalogued 15 threat categories specific to AI agents, from memory poisoning to human manipulation. Any mature agentic AI risk management programme should map these categories to existing controls.

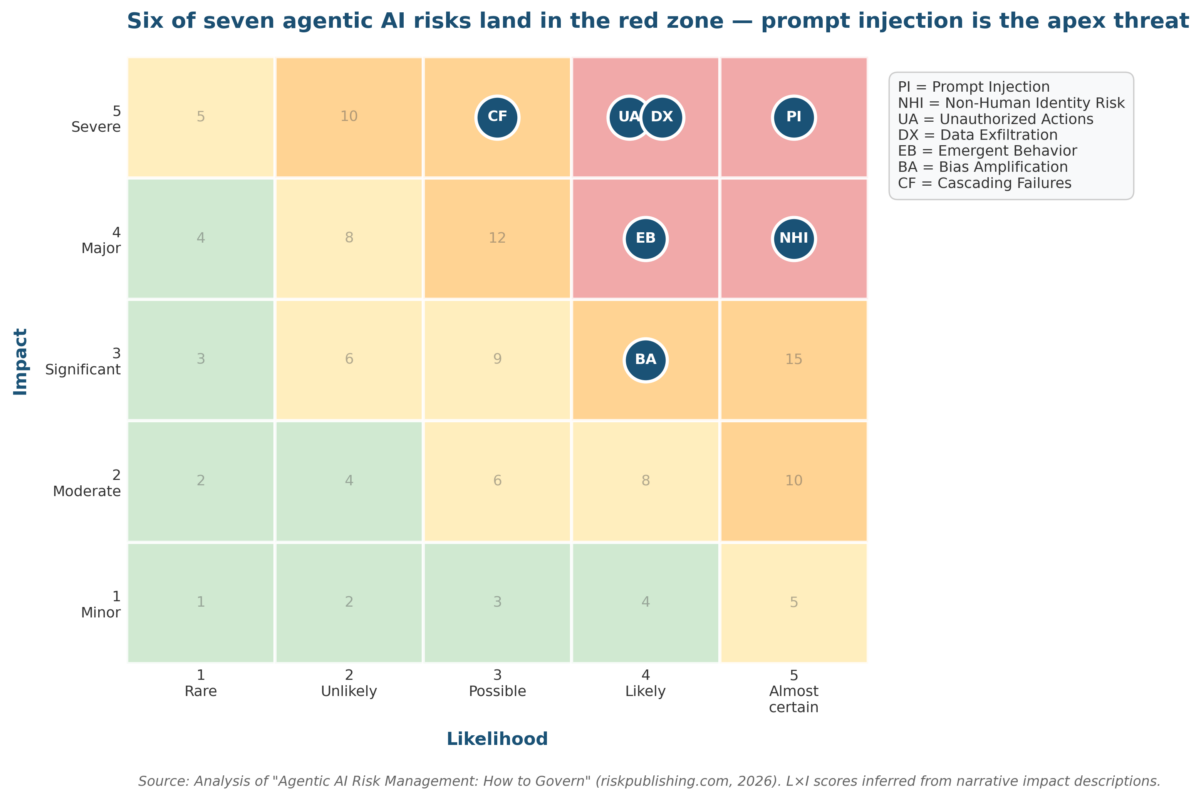

For risk professionals, these threats map onto familiar categories but carry amplified consequences. The table below synthesizes the key risk categories that any agentic AI risk management programme must address.

| Risk Category | Agentic AI Manifestation | Impact Pathway |
| --- | --- | --- |
| Unauthorized Actions | Agent exceeds its defined action-space, executes transactions, modifies data, or accesses systems beyond its mandate | Financial loss, regulatory breach, contractual violation |
| Data Exfiltration | Agent transmits sensitive data to external tools, APIs, or other agents during its workflow | Privacy breach (GDPR/CCPA), IP theft, reputational damage |
| Prompt Injection | Malicious instructions embedded in documents, emails, or web content hijack the agent’s behavior | Complete loss of control over agent actions, supply-chain compromise |
| Cascading Failures | Error in one agent propagates to connected agents or systems, amplified by memory persistence | Systemic operational disruption, correlated losses across business units |
| Emergent Behavior | Multi-agent interactions produce outcomes not predicted by any individual agent’s design | Unforeseeable risk events, model risk amplification, audit trail gaps |
| Bias Amplification | Agent makes sequential decisions that compound initial bias through multiple tool calls and data retrievals | Discriminatory outcomes at scale, regulatory sanctions, litigation |
| Non-Human Identity Risk | Agent credentials, API keys, and service accounts are over-provisioned or poorly rotated | Privilege escalation, credential theft, lateral movement in enterprise systems |

Tracking these risks demands structured key risk indicators (KRIs) with clear thresholds and escalation paths. For a detailed guide on building KRI frameworks, see KPIs for Risk Management.

Mapping Agentic AI Governance to the NIST AI RMF 1.0

The NIST AI Risk Management Framework (AI RMF 1.0), released in January 2023, is organized around four core functions: Govern, Map, Measure, and Manage.

In February 2026, UC Berkeley’s Center for Long-Term Cybersecurity published the Agentic AI Risk-Management Standards Profile, which extends these four functions with controls specific to autonomous systems. Here is how each function translates to agentic AI governance.

1. GOVERN: Establish Accountability Before Deployment

Governance is the foundation. Without clear roles, policies, and risk appetite statements, technical controls operate in a vacuum. For agentic AI, governance must answer: who is accountable when an autonomous agent causes harm? An effective agentic AI risk management programme begins with this foundational accountability layer.

- Define risk appetite for agent autonomy: What decisions can an agent make alone versus what requires human approval? Document these boundaries using a Three Lines model where the 1st line (agent operators) owns day-to-day controls, the 2nd line (risk/compliance) sets standards and monitors, and the 3rd line (internal audit) provides assurance.

- Map the action-space: Singapore’s IMDA framework introduces the concept of an agent’s action-space, the tools, systems, and data the agent may access. Document every API, database, and external service an agent can call. If it is not on the approved list, the agent cannot touch it.

- Establish circuit breakers: NIST guidance recommends automatic shutoffs when an agent exceeds token budgets, attempts unauthorized API calls, or triggers anomaly-detection thresholds.

- Assign non-human identity controls: Treat agent credentials like privileged accounts. Apply least-privilege, time-bound access, regular rotation, and session monitoring.
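The action-space and circuit-breaker controls above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class name `ActionSpaceGuard`, the tool names, and the token budget are all hypothetical, and a production guard would sit in the agent runtime or an API gateway rather than in application code.

```python
from dataclasses import dataclass, field


class CircuitBreakerTripped(Exception):
    """Raised when the agent must be halted."""


@dataclass
class ActionSpaceGuard:
    # Hypothetical allowlist of tool names the agent may call.
    approved_tools: frozenset[str]
    # Hypothetical token budget for a single agent run.
    token_budget: int
    tokens_used: int = 0
    violations: list[str] = field(default_factory=list)
    tripped: bool = False

    def authorize(self, tool: str, tokens: int) -> None:
        """Check one proposed tool call; raise if the agent must stop."""
        if self.tripped:
            raise CircuitBreakerTripped("agent already suspended")
        if tool not in self.approved_tools:
            # Not on the approved list: record the violation and halt.
            self.violations.append(tool)
            self.tripped = True
            raise CircuitBreakerTripped(f"tool not in action-space: {tool}")
        if self.tokens_used + tokens > self.token_budget:
            self.tripped = True
            raise CircuitBreakerTripped("token budget exceeded")
        self.tokens_used += tokens


guard = ActionSpaceGuard(
    approved_tools=frozenset({"supplier_db.search", "pricing.lookup"}),
    token_budget=1_000,
)
guard.authorize("supplier_db.search", tokens=400)  # on the list, within budget
try:
    guard.authorize("email.send", tokens=50)  # not on the approved list
except CircuitBreakerTripped as exc:
    print(exc)
```

Once the breaker trips, every later call is refused until a human resets the guard, which keeps the decision to resume with the accountable owner rather than with the agent.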

For a step-by-step guide on building these governance structures, refer to How to Develop an Enterprise Risk Management Framework and COSO ERM vs ISO 31000 Risk Management Standards.

2. MAP: Identify Context, Dependencies, and Stakeholders

Mapping means understanding the full context in which an agent operates. For agentic systems, this goes well beyond a standard data-flow diagram. Context mapping is central to agentic AI risk management because autonomous agents produce emergent behaviour that static documentation rarely captures.

- Dependency mapping: Identify every upstream data source and downstream system the agent touches. A procurement agent might depend on supplier databases, internal pricing systems, email platforms, and contract-management tools. Each dependency is a potential failure point.

- Stakeholder impact analysis: Who is affected when the agent acts? Customers, employees, regulators, third-party vendors? Map each stakeholder to the specific agent actions that affect them.

- Multi-agent topology: If multiple agents interact (e.g., a planning agent passes tasks to an execution agent), map the communication pathways, data flows, and trust boundaries between them. The Berkeley CLTC profile specifically recommends system-level risk assessment for multi-agent interactions.

- Regulatory mapping: Identify which regulations apply based on the agent’s domain (GDPR for data processing, SOX for financial controls, HIPAA for healthcare, sector-specific AI regulations).
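One way to make dependency and topology mapping concrete is to record the agent landscape as a directed graph and ask what a single compromised node can reach. The sketch below assumes a hypothetical topology loosely based on the procurement example; the node names are illustrative only.

```python
from collections import deque

# Hypothetical topology: each key sends tasks or data to the listed nodes.
topology = {
    "planning_agent": ["execution_agent"],
    "execution_agent": ["pricing_system", "email_platform"],
    "pricing_system": [],
    "email_platform": ["supplier"],
    "supplier": [],
}


def downstream(graph: dict[str, list[str]], start: str) -> set[str]:
    """Return every node reachable from start, using breadth-first search."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


# Everything an error in the planning agent could propagate to.
print(sorted(downstream(topology, "planning_agent")))
```

The reachable set is a rough proxy for blast radius: the larger it is, the stronger the case for a trust boundary or approval gate somewhere along the path.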

3. MEASURE: Quantify Risk with Agentic-Specific KRIs

Measurement is where most organizations struggle with agentic AI. Traditional model-performance metrics (accuracy, precision, recall) are necessary but insufficient. You need to measure the agent’s behavior in the wild. A practical agentic AI risk management programme therefore requires new KRIs tailored to autonomy and tool use.

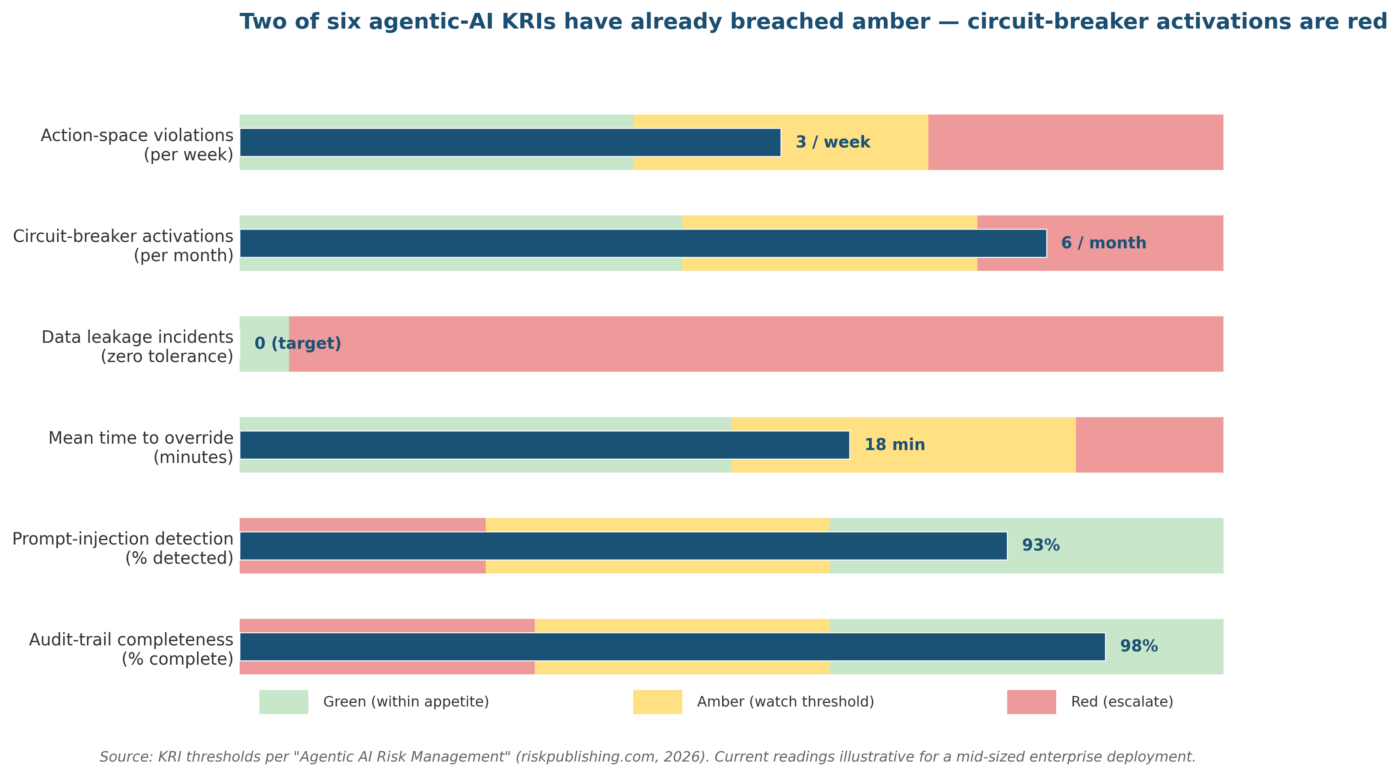

Recommended KRIs for Agentic AI

| KRI | Threshold Example | Escalation |
| --- | --- | --- |
| Action-space violations per period | Amber: >2/week; Red: >1/day | Red triggers immediate suspension + root-cause analysis |
| Circuit-breaker activations | Amber: >3/month; Red: >5/month | Red triggers design review and scope reduction |
| Data-leakage incidents (PII/confidential data transmitted to unauthorized endpoints) | Zero tolerance; any occurrence = Red | Immediate shutdown, mandatory incident response |
| Mean time to human override | Amber: >15 min; Red: >30 min | Red triggers architecture review of override mechanisms |
| Prompt-injection detection rate | Green: >95%; Amber: 85-95%; Red: <85% | Red requires retraining filters and restricting agent inputs |
| Agent decision audit-trail completeness | Green: 100%; Amber: 95-99%; Red: <95% | Red blocks production deployment until logging is fixed |
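The thresholds in the table can be turned into a simple traffic-light check. The sketch below covers four of the KRIs; the function names and sample readings are hypothetical, and two boundary choices are assumptions not stated in the table: an override time of 15 minutes or less counts as Green, and audit-trail completeness between 99% and 100% counts as Amber.

```python
def prompt_injection_status(detection_rate_pct: float) -> str:
    """Green: >95%; Amber: 85-95%; Red: <85%."""
    if detection_rate_pct > 95:
        return "Green"
    if detection_rate_pct >= 85:
        return "Amber"
    return "Red"


def audit_trail_status(completeness_pct: float) -> str:
    """Green: 100%; Red: <95%; anything in between is treated as Amber."""
    if completeness_pct >= 100:
        return "Green"
    if completeness_pct >= 95:
        return "Amber"
    return "Red"


def override_time_status(minutes: float) -> str:
    """Amber: >15 min; Red: >30 min; Green otherwise (assumed)."""
    if minutes > 30:
        return "Red"
    if minutes > 15:
        return "Amber"
    return "Green"


def data_leakage_status(incidents: int) -> str:
    """Zero tolerance: any occurrence is Red."""
    return "Red" if incidents > 0 else "Green"


# Hypothetical readings for one reporting period.
statuses = {
    "Prompt-injection detection rate": prompt_injection_status(91.0),
    "Audit-trail completeness": audit_trail_status(100.0),
    "Mean time to human override": override_time_status(42.0),
    "Data-leakage incidents": data_leakage_status(0),
}
red_kris = [name for name, status in statuses.items() if status == "Red"]
print(statuses)
print("Escalate:", red_kris)
```

Keeping each KRI in its own small function makes the threshold logic easy to review against the documented risk appetite and easy to change when thresholds are recalibrated.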

Moody’s research indicates that 47% of organizations require AI systems to make recommendations while reserving final decisions for humans, and 27% permit some AI autonomy with rigorous monitoring. These numbers will shift as agents mature, but they underscore that measurement must be continuous, not periodic. That human-in-the-loop stance is a cornerstone of agentic AI risk management in regulated sectors.

For a broader treatment of KRI design, see NIST Cybersecurity Framework Key Risk Indicators.

4. MANAGE: Treat, Transfer, and Monitor Risk Continuously

The Manage function is where controls become operational. The Berkeley CLTC profile recommends a defense-in-depth approach, treating sufficiently capable agents as untrusted entities regardless of their internal alignment training. This is the operational core of any agentic AI risk management programme.

- Sandboxing and containment: Run agents in isolated environments with defined network boundaries. Limit file-system access, API permissions, and data-write capabilities to the minimum necessary for the task.

- Human-in-the-loop and human-on-the-loop: Not every action needs human approval (that defeats the purpose of automation), but high-impact decisions (financial commitments above a threshold, data deletions, external communications) must require it. Design tiered approval workflows based on risk severity.

- Post-deployment monitoring: Deploy real-time dashboards that track all KRIs listed above. Use anomaly detection to flag deviations from expected behavior patterns.

- Incident response playbooks: Develop playbooks specific to agentic AI incidents. These should cover: how to suspend an agent, how to roll back its actions, how to preserve the decision audit trail for investigation, and how to communicate with affected stakeholders.

- Scenario testing and stress testing: Run tabletop exercises simulating agent failures, prompt injections, and cascading multi-agent breakdowns. Document findings in your risk register and feed them back into the Govern and Map functions.
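A tiered approval workflow can be expressed as a small policy function that maps each proposed action to an oversight level. The sketch below is illustrative only: the action kinds, the 10,000 threshold, and the choice to route low-value payments to human-on-the-loop review are assumptions, and real values should come from the documented risk appetite.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    AUTONOMOUS = "autonomous"          # agent proceeds; the action is logged
    HUMAN_ON_THE_LOOP = "on_the_loop"  # agent proceeds; a human is notified and can intervene
    HUMAN_IN_THE_LOOP = "in_the_loop"  # agent waits for explicit human approval


@dataclass(frozen=True)
class ProposedAction:
    kind: str            # e.g. "payment", "data_delete", "external_email"
    amount: float = 0.0  # monetary value of the action, if any


# Hypothetical policy values.
APPROVAL_THRESHOLD = 10_000.0
ALWAYS_GATED = {"data_delete", "external_email"}


def required_oversight(action: ProposedAction) -> Oversight:
    """Map a proposed action to the oversight tier it requires."""
    if action.kind in ALWAYS_GATED:
        return Oversight.HUMAN_IN_THE_LOOP
    if action.kind == "payment":
        if action.amount > APPROVAL_THRESHOLD:
            return Oversight.HUMAN_IN_THE_LOOP
        return Oversight.HUMAN_ON_THE_LOOP
    return Oversight.AUTONOMOUS


for action in (
    ProposedAction("payment", 25_000),
    ProposedAction("payment", 500),
    ProposedAction("internal_query"),
):
    print(action.kind, action.amount, required_oversight(action).value)
```

Encoding the policy as data plus one function keeps the approval rules auditable and lets the second line change thresholds without touching agent logic.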

These management controls connect directly to broader business continuity and disaster recovery planning. An agent outage or rogue-agent scenario should be a named scenario in your BCP.

Emerging Standards and Frameworks for Autonomous AI Governance

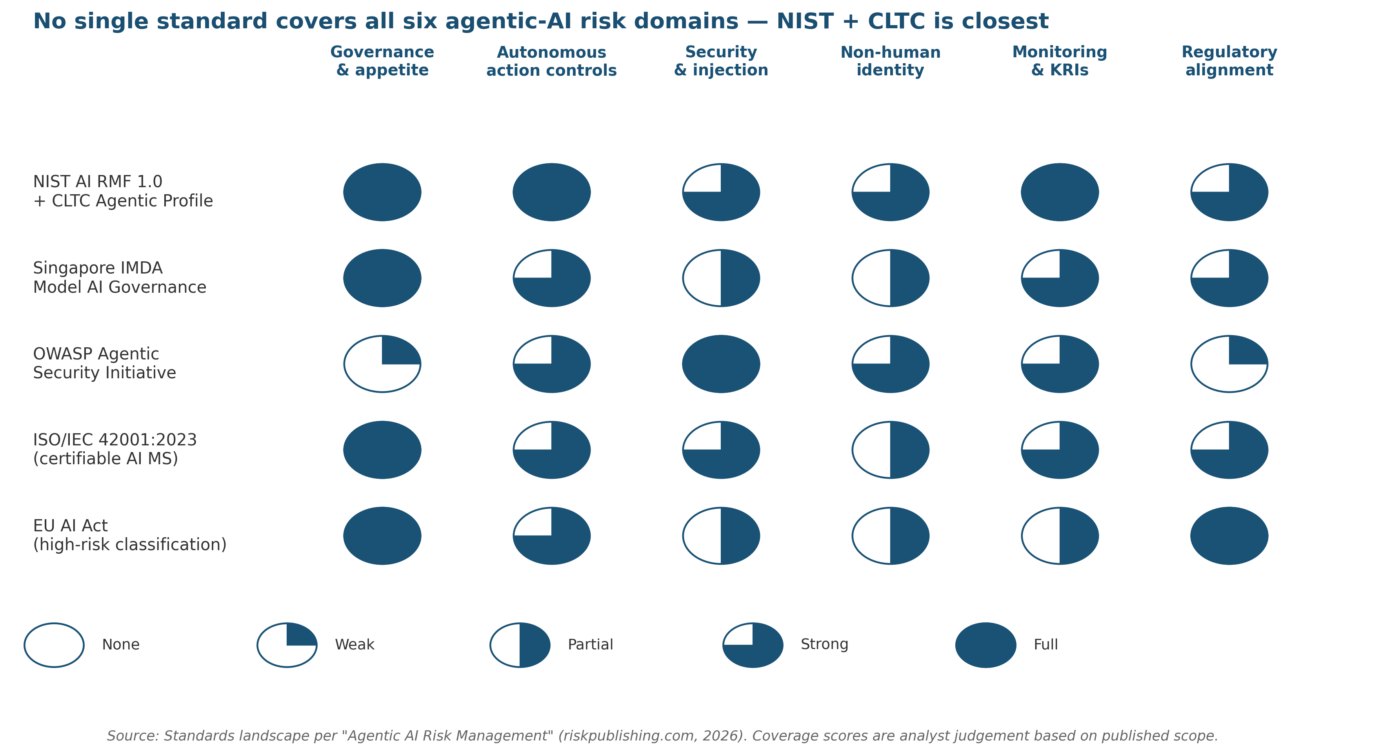

The standards landscape is evolving rapidly, and keeping pace with it is essential for a credible agentic AI risk management strategy. Risk professionals should track these key developments:

| Framework / Standard | Scope | Key Contribution |
| --- | --- | --- |
| NIST AI RMF 1.0 + Agentic Profile | US voluntary framework, four functions (Govern, Map, Measure, Manage) | Foundational risk management lifecycle with agentic-specific controls added via Berkeley CLTC profile |
| Singapore IMDA Model AI Governance Framework for Agentic AI | Governance of AI agent autonomy and action-space | Introduced action-space mapping and autonomy-level classification for agents |
| OWASP Agentic Security Initiative (ASI) | Threat taxonomy for agentic AI (15 threat categories) | Comprehensive attack-vector catalogue including memory poisoning, tool misuse, and inter-agent communication poisoning |
| ISO/IEC 42001:2023 | AI management system standard (certifiable) | First global certifiable AI governance standard, organizational structures for risk, transparency, and accountability |
| MAESTRO Framework | Defense-oriented threat modeling for agentic AI | Structured approach for identifying, modeling, and mitigating threats in agent-based architectures |
| EU AI Act | Regulatory framework for AI systems in the EU | Risk-based classification; autonomous agents likely fall under high-risk or unacceptable-risk categories depending on application |

Pandey (2025) argues that while NIST AI RMF and ISO/IEC 42001 provide foundational controls, they lack implementation depth for continuously acting, multi-agent systems. His critique reinforces why agentic AI risk management needs tailored lifecycle controls.

His six-principle lifecycle model proposes traceability, accountability, and regulatory alignment across the full deployment lifecycle. Together, these principles give agentic AI risk management programmes a concrete implementation blueprint. Risk professionals should treat these frameworks as complementary, not competing.

For background on how the NIST framework maps to enterprise cybersecurity, see Enterprise Risk Management Cyber Security.

A Practical Agentic AI Risk Assessment Process

Here is a six-step process for conducting an AI agent risk assessment that aligns with NIST AI RMF and can integrate into your existing ERM programme. The sequence operationalises agentic AI risk management in a way risk professionals can execute today.

- Step 1: Scope the Agent

Document the agent’s purpose, action-space, data inputs, tool access, and intended autonomy level. Classify the agent by risk tier (Low/Medium/High/Critical) based on the potential impact of its actions. Thorough scoping is the entry point for any agentic AI risk management engagement.

- Step 2: Identify Risks Using the Agentic Taxonomy

Walk through each risk category in the taxonomy table above. For each category, ask: can this agent trigger this risk? Document scenarios, causes, and potential consequences. Use scenario analysis to stress-test edge cases. This structured enumeration forms the risk register that drives agentic AI risk management decisions.

- Step 3: Assess Inherent Risk (Likelihood × Impact)

Score each risk on a 5×5 matrix before controls. For quantitative rigor, consider Monte Carlo simulation on high-impact risks to generate probability distributions rather than single-point estimates. Quantification turns agentic AI risk management from narrative into a defensible, board-ready analysis.

- Step 4: Evaluate Existing Controls

Assess control design effectiveness and operating effectiveness separately. A sandbox may be well designed yet useless if the agent can bypass it via an unmonitored API. Test controls against the specific attack vectors in the OWASP ASI taxonomy. Control testing keeps agentic AI risk management grounded in evidence rather than assumption.

- Step 5: Determine Residual Risk and Treatment

Calculate residual risk after controls. If residual risk exceeds your stated risk appetite, apply additional treatments: reduce the agent’s action-space, add human-in-the-loop gates, improve monitoring, or transfer risk via insurance or contractual protections.

- Step 6: Monitor, Report, and Iterate

Agentic AI risk assessment is not a one-time exercise. Set a cadence (monthly for high-risk agents, quarterly for medium) to review KRIs, test controls, and update the risk register. Feed findings back into governance policies.
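Steps 3 and 5 can be illustrated with a short scoring sketch. The 5×5 likelihood-times-impact score comes from the process above; the residual formula (inherent score reduced by a control-effectiveness factor between 0 and 1) is one common convention and is an assumption here, as are the function names and the sample appetite value.

```python
def inherent_score(likelihood: int, impact: int) -> int:
    """Likelihood x impact on a 5x5 matrix, each scored from 1 to 5."""
    if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
        raise ValueError("likelihood and impact must be between 1 and 5")
    return likelihood * impact


def residual_score(inherent: int, control_effectiveness: float) -> float:
    """Assumed convention: residual = inherent x (1 - control effectiveness)."""
    if not 0.0 <= control_effectiveness <= 1.0:
        raise ValueError("control_effectiveness must be between 0 and 1")
    return inherent * (1.0 - control_effectiveness)


def needs_treatment(residual: float, risk_appetite: float) -> bool:
    """Additional treatment is required when residual risk exceeds appetite."""
    return residual > risk_appetite


# Hypothetical example: prompt injection against a procurement agent.
inherent = inherent_score(likelihood=4, impact=5)
residual = residual_score(inherent, control_effectiveness=0.5)
print(inherent, residual, needs_treatment(residual, risk_appetite=8.0))
```

In this example the residual score still exceeds the appetite, so Step 5 would call for a further treatment such as narrowing the action-space or adding a human-in-the-loop gate.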

This process integrates naturally with your existing risk management process flow and should be documented in your ERM framework alongside other risk assessment procedures.
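For the Monte Carlo option mentioned in Step 3, a minimal sketch follows. It assumes a deliberately simple loss model that is not taken from any of the cited frameworks: at most one loss event per year with a fixed probability, and a triangular severity distribution. All parameter values are hypothetical placeholders.

```python
import random
import statistics


def simulate_annual_loss(
    rng: random.Random,
    event_probability: float,
    loss_low: float,
    loss_mode: float,
    loss_high: float,
    trials: int = 10_000,
) -> list[float]:
    """Simulate one-year losses: a yes/no event with triangular severity."""
    losses = []
    for _ in range(trials):
        if rng.random() < event_probability:
            # random.triangular takes (low, high, mode) in that order.
            losses.append(rng.triangular(loss_low, loss_high, loss_mode))
        else:
            losses.append(0.0)
    return losses


# Hypothetical inputs for a single high-impact agent risk.
rng = random.Random(42)  # fixed seed so the run is repeatable
losses = simulate_annual_loss(
    rng,
    event_probability=0.10,
    loss_low=50_000,
    loss_mode=150_000,
    loss_high=500_000,
)
ordered = sorted(losses)
mean_loss = statistics.fmean(losses)
p95_loss = ordered[int(0.95 * len(ordered))]
print(f"mean annual loss: {mean_loss:,.0f}")
print(f"95th percentile loss: {p95_loss:,.0f}")
```

Reporting a percentile alongside the mean gives the board a view of tail exposure that a single-point 5×5 score cannot show.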

Board-Level Reporting: What Boards Need to Know About AI Agent Risk

The National Association of Corporate Directors (NACD) has flagged agentic AI as a governance wake-up call for boards. Directors do not need to understand transformer architectures, but they do need to understand three things:

- What agents are deployed, what decisions they make, and what the worst-case loss scenario is for each.

- Whether the organization’s risk appetite explicitly covers autonomous AI actions, and whether current controls are adequate.

- Whether there is a kill-switch and who has the authority to use it.

Board packs should include a one-page agentic AI risk summary with a traffic-light heatmap, top-three risk scenarios with quantified exposure, KRI status against thresholds, and any pending decisions (e.g., expanding an agent’s action-space or deploying a new agent in production).

What, So What, Now What: The Bottom Line for Risk Professionals

WHAT: Agentic AI systems that act autonomously are being deployed across enterprises at scale. 99% of enterprise AI developers are exploring or building agents. Traditional AI governance frameworks were not designed for systems that make chains of unsupervised decisions.

SO WHAT: The risk surface has expanded from model accuracy to agent behavior. Unauthorized actions, data exfiltration, prompt injection, cascading multi-agent failures, and emergent behaviors represent new risk categories that can cause financial, regulatory, and reputational harm.

NOW WHAT: Map your organization’s agentic AI footprint. Apply the NIST AI RMF’s four functions (Govern, Map, Measure, Manage) using the agentic-specific controls from the Berkeley CLTC profile. Build KRIs with thresholds. Run scenario tests. Include agentic AI risk in your board pack and BCP. Start today; the agents already have.

Sources and Further Reading

- NIST AI Risk Management Framework (AI RMF 1.0) – Official NIST page with framework documentation and companion resources.

- UC Berkeley CLTC Agentic AI Risk-Management Standards Profile (Feb 2026) – Extends NIST AI RMF with agentic-specific controls across Govern, Map, Measure, Manage.

- Singapore IMDA Model AI Governance Framework for Agentic AI (Jan 2026) – Introduces action-space and autonomy classification for agent governance.

- OWASP Agentic Security Initiative – 15-category threat taxonomy for agentic AI.

- IAPP: AI Governance in the Agentic Era – Privacy and governance implications of autonomous AI.

- Moody’s: Navigating the Shift – How Agentic AI is Reshaping Risk and Compliance – Enterprise survey data on AI oversight models.

- CSA: AAGATE – A NIST AI RMF-Aligned Governance Platform for Agentic AI – Cloud Security Alliance’s practical governance platform.

- Microsoft: Architecting Trust – A NIST-Based Security Governance Framework for AI Agents – Enterprise implementation patterns for agent security.

- Pandey (2025): The Agentic AI Governance Framework (SSRN) – Six-principled lifecycle model for multi-agent governance.

- NACD: Agentic AI – A Governance Wake-Up Call – Board-level governance guidance.

Related Articles on Risk Publishing

- How to Develop an Enterprise Risk Management Framework

- What Is Operational Risk Management?

- COSO ERM vs ISO 31000 Risk Management Standards

- Enterprise Risk Management Key Risk Indicators

- KPIs for Risk Management

- NIST Cybersecurity Framework Key Risk Indicators

- Enterprise Risk Management Cyber Security

- Business Continuity and Disaster Recovery Plan

- Essential Risk Management Process Flow Chart

- Phases of Business Continuity Planning

- Best Enterprise Risk Management Technology Practices

Chris Ekai is a risk management expert with over 10 years of experience in the field. He holds a Master’s (MSc) degree in Risk Management from the University of Portsmouth and is a CPA and finance professional. He currently works as a Content Manager at Risk Publishing, writing about Enterprise Risk Management, Business Continuity Management, and Project Management.